Capturing Intelligence at the Edge: Building Image Datasets with EdgeVision and ESP32-CAM

In the world of edge AI and TinyML, one of the biggest roadblocks is collecting and labeling high-quality data — especially when you're working with constrained hardware like the ESP32-CAM. That's where EdgeVision, a library from Consentium IoT, steps in.

It transforms your humble ESP32-CAM board into a full-fledged, web-controlled image capture and labeling studio, making it remarkably easy to generate datasets right from the device — no PC, Raspberry Pi, or cloud connection required.

🔍 What Is EdgeVision?

EdgeVision is a modular library and browser-based tool that allows developers, students, and ML practitioners to:

- Capture still images at fixed intervals via the ESP32-CAM

- Label data in the browser by naming each ZIP file after its class

- Bundle and download image datasets as ZIP archives

- Use the data for training or real-time ML inference

Built for simplicity and speed, it turns the ESP32 into a mini data station for collecting real-world images — perfect for object detection, pose recognition, and environment monitoring.

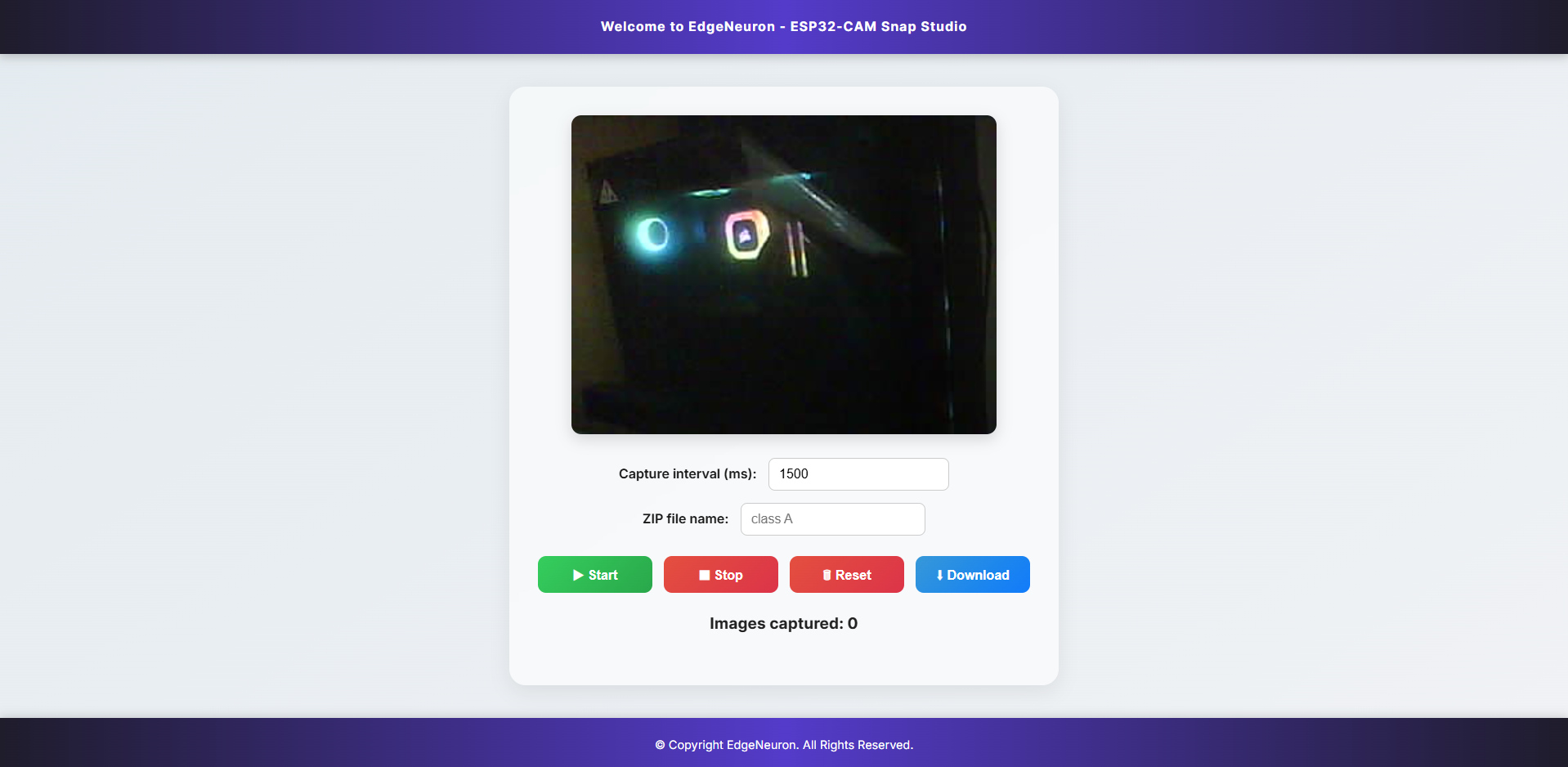

🖼️ What the Interface Looks Like

Once the firmware is flashed and the device is connected to your network, EdgeVision serves a live video stream and a capture interface in the browser.

You can:

- 🎥 View the live camera feed

- ⏱️ Set a capture interval (e.g., every 1500 ms)

- 🏷️ Enter a ZIP filename as the label (e.g., "Class A", "Fall", "Walking")

- 🟢 Click Start to begin snapping images

- 🔴 Stop, 🔁 Reset, and ⬇️ Download when you're done

Each image is stored in memory and zipped — ready for machine learning workflows.
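The Start, Stop, and Reset workflow above can be pictured as a small state machine. The sketch below is a hypothetical host-side model, written in C++ to match the Arduino sketches in this post; it is not EdgeVision's implementation. The CaptureSession class, its methods, and the Walking_0.jpg naming scheme are assumptions, and the caller passes in a millisecond timestamp where a board would use millis().

```cpp
#include <cstdint>
#include <string>
#include <vector>

// Hypothetical model of the browser capture workflow (not EdgeVision's API).
class CaptureSession {
public:
    explicit CaptureSession(std::uint32_t interval_ms) : interval_ms_(interval_ms) {}

    // Begin capturing under a label. The last-capture time is back-dated by one
    // interval (unsigned wraparound is well-defined) so the next tick captures.
    void start(const std::string& label, std::uint32_t now_ms) {
        label_ = label;
        running_ = true;
        last_capture_ms_ = now_ms - interval_ms_;
    }

    // Pause capturing; images taken so far are kept.
    void stop() { running_ = false; }

    // Stop and discard every captured image.
    void reset() {
        running_ = false;
        images_.clear();
    }

    // Call often; records one frame whenever a full interval has elapsed.
    void tick(std::uint32_t now_ms) {
        if (!running_) return;
        if (now_ms - last_capture_ms_ >= interval_ms_) {
            last_capture_ms_ = now_ms;
            images_.push_back(label_ + "_" + std::to_string(images_.size()) + ".jpg");
        }
    }

    const std::vector<std::string>& images() const { return images_; }

private:
    std::uint32_t interval_ms_;
    std::uint32_t last_capture_ms_ = 0;
    bool running_ = false;
    std::string label_;
    std::vector<std::string> images_;
};
```

Ticking this model every 100 ms from 0 to 6000 ms with a 1500 ms interval records five frames (at 0, 1500, 3000, 4500, and 6000 ms). As a rule of thumb, a one-minute session at 1500 ms yields about 60,000 / 1,500 = 40 images for that class.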

⚡ Getting Started in Minutes

🧩 Hardware Requirements

- ESP32-CAM module (AI Thinker or similar)

- USB-to-serial programmer (such as an ESP32-CAM-MB board or an FTDI adapter) with 5V and GND wired correctly

- USB cable

- Optional: Battery or external 5V source for field capture

🔧 Installation via Arduino Library Manager

EdgeVision is officially available via the Arduino Library Manager, making it easy to install.

- Open Arduino IDE

- Go to Tools → Manage Libraries

- Search for EdgeVision

- Click Install

After installation, go to:

File → Examples → EdgeVision → CameraStreaming

Enter your Wi-Fi credentials in the sketch and flash it to your ESP32-CAM board. Then open the Serial Monitor at 115200 baud to read the board's IP address, and browse to that address to reach the web interface.

🧠 Let’s Understand the Code

Let’s walk through the default CameraStreaming.ino sketch. It’s fewer than 30 lines of code, but it does a lot behind the scenes.

1. Include the Library and Set Wi-Fi

```cpp
#include <EdgeVision.h>

const char* ssid = "YOUR_WIFI_SSID";
const char* password = "YOUR_WIFI_PASSWORD";

EdgeVision cam;
```

This pulls in the EdgeVision library, sets your Wi-Fi credentials, and creates the cam object. The cam object controls everything: Wi-Fi, camera, and HTTP server.

2. Setup: Connect, Configure, Serve

```cpp
void setup() {
  Serial.begin(115200);

  cam.initWiFi(ssid, password);
  cam.initCamera();
  cam.startCameraServer();

  Serial.println("Camera server started.");
}
```

- cam.initWiFi(...): Connects your ESP32 to your local Wi-Fi network

- cam.initCamera(): Initializes the OV2640 camera (resolution, buffers, pins)

- cam.startCameraServer(): Starts an HTTP MJPEG server for live video and control

When this finishes, your ESP32 is ready to stream and capture from the browser.

3. Loop: Keep the Server Alive

```cpp
void loop() {
  cam.keepServerAlive();
}
```

Called on every pass through loop(), this non-blocking function keeps the internal web server handling browser requests and serving the live stream.
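Non-blocking Arduino code avoids delay() and instead compares timestamps on each pass through loop(). The sketch below shows that general pattern in portable C++ with a hypothetical IntervalTimer helper, which is not part of EdgeVision. On a board, now_ms would come from millis(), a 32-bit counter that wraps to zero after roughly 49.7 days; unsigned subtraction keeps the elapsed-time check correct across that wrap.

```cpp
#include <cstdint>

// Hypothetical helper: reports "due" at most once per interval, never blocks.
class IntervalTimer {
public:
    explicit IntervalTimer(std::uint32_t interval_ms) : interval_ms_(interval_ms) {}

    // True when at least interval_ms has passed since the last time it fired.
    // (now_ms - last_ms_) is computed modulo 2^32, so it stays correct even
    // after the millisecond counter wraps around.
    bool due(std::uint32_t now_ms) {
        if (static_cast<std::uint32_t>(now_ms - last_ms_) >= interval_ms_) {
            last_ms_ = now_ms;
            return true;
        }
        return false;
    }

private:
    std::uint32_t interval_ms_;
    std::uint32_t last_ms_ = 0;
};
```

In a sketch built this way, loop() would still call cam.keepServerAlive() on every pass and run any extra periodic work only when timer.due(millis()) returns true, so the web server is never starved.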

🧠 Why EdgeVision Matters

Let’s be real: collecting clean, labeled data is a pain.

EdgeVision simplifies the most tedious part of your ML pipeline — especially when you're targeting embedded or offline devices.

Here’s why it matters:

1. 📊 Collect Real-World Data

Whether you're capturing gestures, motion patterns, object shapes, or lighting changes, collecting authentic, device-native data is critical. Training your model on what the sensor actually sees boosts real-world accuracy.

2. 🏷️ Label as You Capture

Instead of moving files, renaming folders, and batching images manually, EdgeVision labels the dataset automatically based on the ZIP name you provide. One ZIP = One class = Zero post-processing.
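Because each archive holds a single class, a training script can recover labels from file names alone. The C++17 sketch below illustrates the idea; label_from_zip, label_archive, and LabeledImage are hypothetical names, and it assumes the archive name minus ".zip" is the class label, which matches the description above but is not a documented EdgeVision format.

```cpp
#include <filesystem>
#include <string>
#include <vector>

// One training example: an image file name paired with its class label.
struct LabeledImage {
    std::string file;
    std::string label;
};

// "datasets/Walking.zip" -> "Walking": drop the directory and the extension.
std::string label_from_zip(const std::string& zip_path) {
    return std::filesystem::path(zip_path).stem().string();
}

// Pair every image extracted from one archive with that archive's label.
std::vector<LabeledImage> label_archive(const std::string& zip_path,
                                        const std::vector<std::string>& image_files) {
    const std::string label = label_from_zip(zip_path);
    std::vector<LabeledImage> out;
    out.reserve(image_files.size());
    for (const auto& f : image_files) {
        out.push_back({f, label});
    }
    return out;
}
```

Labels with spaces survive unchanged, so an archive named "Class A.zip" yields the label "Class A".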

3. 🧠 Train Better TinyML Models

The datasets it produces are meant to feed EdgeModelKit, a Python library from Consentium IoT designed to preprocess, structure, and train ML models on data captured by EdgeVision.

🌍 Use Cases in the Wild

- 🤸 Activity Classification: Standing, walking, falling — perfect for health monitoring

- 🧪 STEM Education: Let students build datasets and deploy models themselves

- 🌱 Smart Agriculture: Detect pests, rot, or leaf shapes in the field

- 🏗️ Industrial Monitoring: Log visual anomalies without sending full video

- 🐶 Pet Recognition: Teach your ESP32 to tell the difference between a cat and a dog

All of this, without an external computer in the loop.

⚙️ Under the Hood

EdgeVision uses:

- The ESP32-CAM's OV2640 camera module for image capture

- MJPEG streaming via a lightweight HTTP server

- Timed snapshots with customizable interval control

- SPIFFS or RAM to temporarily store and package images

- JavaScript on the frontend to manage UI and ZIP creation

It’s optimized to run entirely offline on the local network, ideal for bandwidth-constrained environments.
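MJPEG over HTTP is commonly delivered as a multipart/x-mixed-replace response: the server sends one header, then an open-ended series of parts, each carrying a single JPEG frame that the browser swaps in. The C++ sketch below shows that framing in general terms. The boundary string "frame" and the function names are assumptions; EdgeVision's own server may use a different boundary or extra per-part headers.

```cpp
#include <cstdint>
#include <string>
#include <vector>

// Hypothetical boundary; real servers choose their own string.
const std::string kBoundary = "frame";

// Content-Type header sent once when a client opens the stream.
std::string stream_content_type() {
    return "multipart/x-mixed-replace; boundary=" + kBoundary;
}

// Wrap one JPEG frame as a multipart part: boundary line, part headers,
// a blank line, the raw JPEG bytes, then CRLF before the next boundary.
std::vector<std::uint8_t> frame_part(const std::vector<std::uint8_t>& jpeg) {
    std::string head = "--" + kBoundary +
                       "\r\nContent-Type: image/jpeg\r\nContent-Length: " +
                       std::to_string(jpeg.size()) + "\r\n\r\n";
    std::vector<std::uint8_t> part(head.begin(), head.end());
    part.insert(part.end(), jpeg.begin(), jpeg.end());
    part.push_back('\r');
    part.push_back('\n');
    return part;
}
```

Sending an explicit Content-Length with each part lets the client know exactly where a frame ends without scanning the JPEG data for the boundary.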

🧰 Pro Tips

- For better image quality, use natural lighting. IR LEDs can help with night capture, but only on a camera module that lacks an IR-cut filter.

- Capture images from multiple angles and lighting conditions to improve dataset diversity.

- Use a tripod or mount to keep the camera fixed while collecting.

- Run several ESP32-CAMs in parallel, each collecting a different class label, to speed up data capture.

📘 Learn More

- 📦 GitHub Repo: ConsentiumIoT/EdgeVision

- 🔬 Dataset Training Toolkit: EdgeModelKit

- 🧠 Tutorials & Docs: https://docs.consentiumiot.com

📫 Get in Touch

Questions or suggestions?


🌐 Website: https://consentiumiot.com

EdgeVision is open-source and MIT licensed. It’s part of a growing suite of edge AI tools developed by Consentium IoT Labs — powering smarter sensing, right where the data is born.